124 Days of Let's Encrypt

Published 2016-04-05

This is a quick status update from the Early Impacts of Let's Encrypt post.

Today marks 124 days since Let's Encrypt's beta started, or 204 days since the Hello World. As of this writing, Let's Encrypt has issued 1.57M certificates, of which 1.31M are still valid.

With that out of the way, the main criticisms against the first round of statistics I published were:

- The data sources were too limited, being only 3 of the 10 known public CT logs.

- I was working from fully-qualified domain names (FQDNs) rather than registered domain names (eTLD+1s), leading to counting variations such as

tacticalsecret.comandwww.tacticalsecret.comas separate entities.

I've remedied these things now; the ct-sql tool stores the "registered domain" metadata in its own table, and I've acquired larger and more powerful computing capacity so that I can operate on the larger datasets.

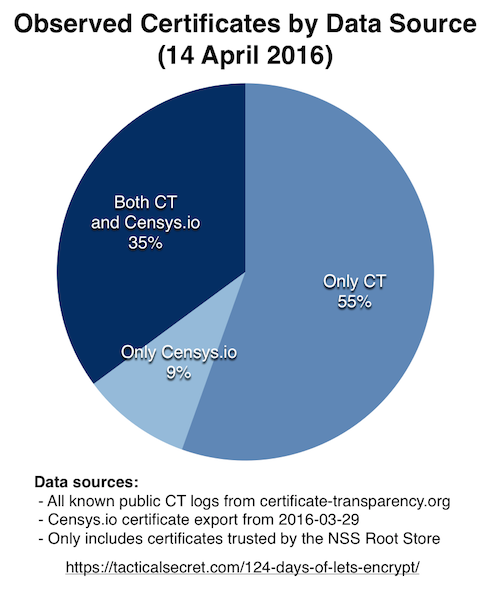

Certificates in Censys vs CT vs Both (Updated 14 April)

Both the percentage, and the absolute number of certificates known by Censys but not in Certificate Transparency logs have decreased. Some of this is undoubtedly because 7 logs left out of the previous dataset were included this time, but I believe a number of those missing certificates have been submitted to logs; I submitted some on a whim, and I have heard rumor that others did the same.

Update 14 April 2016: The query should have been updated when I increased the number of logs; I apologize. The original query, from Early Impacts of Let's Encrypt caused duplicate entries to be counted, showing >28M certificates when in fact, the whole database of CT+Censys contains only 17.7M certificates.

The updates show that only 9% of observed, currently-valid certificates are in Censys.io but not inserted into CT.

The updated query is below:

--

-- Determine the quantity of certificates which are in

-- Censys but not in CT

--

CREATE TEMPORARY TABLE IF NOT EXISTS CTvsCensys SELECT

c.certID,

(ct.certID IS NOT NULL

AND censys.certID IS NULL) AS onlyCT,

(censys.certID IS NOT NULL

AND ct.logID IS NULL) AS onlyCensys,

(ct.logId IS NOT NULL

AND censys.certID IS NOT NULL) AS inBoth

FROM

unexpired_certificate AS c

LEFT JOIN

censysentry AS censys ON censys.certID = c.certID

LEFT JOIN

ctlogentry AS ct ON ct.certID = c.certID

GROUP BY c.certID;

SELECT SUM(onlyCT), SUM(onlyCensys), SUM(inBoth) FROM CTvsCensys;The output is here: CertsInCTversusCensys.tsv

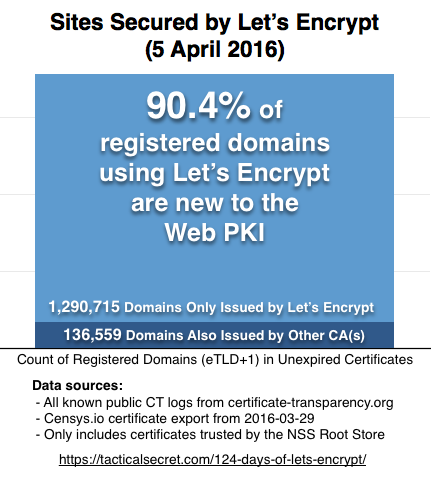

Sites Secured By Other CAs

I've modified this query a bit, both to use the new registereddomain table of eTLD+1 entries, and to use a temporary table to speed up uniqueness calculations.

--

-- Determine how many different companies have issued

-- certificates for a given registered domain. This

-- query starts from a list of all currently-valid

-- certificates from Let's Encrypt.

--

CREATE TEMPORARY TABLE IF NOT EXISTS tmp_domain (domain varchar(255) unique)

SELECT DISTINCT domain FROM le_current_certificate NATURAL JOIN registereddomain;

SELECT

COUNT(*) AS numDomains,

(SELECT

COUNT(DISTINCT companyID)

FROM

registereddomain AS n2

NATURAL JOIN

certificate AS c2

NATURAL JOIN

issuercompany AS ic2

WHERE

n2.domain = n.domain) AS numCompanies

FROM

tmp_domain AS n

GROUP BY numCompanies;The output is here: LERegDomainsIssuedElsewhere.tsv

Technical Updates

In brief, here's what changed in the tooling since the last post:

Censys.io Imports

The ct-sql tool can now take a URL to a Censys.io JSON export, download and import it without having to first write it to disk; helpful when you don't have 100+ GB of scratch space to work with.

[user@vultr ~]$ ct-sql -config ./bin/ct-sql.ini -censysUrl https://path_to/certificates.json

Starting Censys Import, using HTTP Importer

| 0.1% (160841728 of 115300222415) (13h33m0s remaining)The mechanism behind the ct-sql tool handles already-imported certificates cleanly, so I've actually imported several copies of the Censys.io certificate dataset at this point. For the purposes of this exercise though, I'm working from the dataset exported from Censys.io on 2016-03-29.

CT Logs

I've imported all the logs, and have a Systemd timer keep them up to date. The log list is available in JSON format at https://www.certificate-transparency.org/known-logs/log_list.json, so a bit of kludgy scripting means the logs in use for my statistics now are:

https://log.certly.io

https://ct.izenpe.com

https://ct.googleapis.com/pilot

https://ct.googleapis.com/rocketeer

https://ct.googleapis.com/aviator

https://ct.ws.symantec.com

https://ct.wosign.com

https://ct1.digicert-ct.com/log

https://ctlog.api.venafi.com

https://vega.ws.symantec.comCompany Mapping

Most certificate authorities use many PKI roots to conduct their business. The ct-sql tool only knows about particular roots, not companies, so I made a rough mapping of roots-to-companies. It's very rough, and uses name matching rather than something more stable like authority Key IDs.

Anyway, it's a thing. You can see it here: IssuerToCompanyMap.sql

The result lets you roughly connect issuers to companies with a SELECT FROM unexpired_certificate NATURAL JOIN issuercompany GROUP BY companyid kind of approach.

Other things

There's rates and ETAs now on operations, so you can have an idea of how long your network will be saturated on an import. Or see that you're getting rate limited heavily by a particular CT log.

If you want to keep full DER-encoded certificates around, the tool can now write them in a folder structure on disk for you, 1024 certs per subfolder. They're indexed by the internal Cert ID from the database.

I've put them on disk this way, separate from the relational DB, as a cheap way to avoid worrying about maintaining indices and accidental caching. Also, this stuff gets big quickly, so it's nice to be able to use separate S3 volumes for these... or just delete the oldest ones using find.

Next Steps

I'm planning to spend some time this month reworking Let's Encrypt's stats page. Most of that work will be producing a stats-producing utility in Boulder itself, which the Let's Encrypt ops folks can run a few times a day, uploading the results to an outside datacenter. Once that's in place, I'll be working on a rewrite of the stats page itself, slicing the data in more interesting ways than it currently is.

Ideas for what to produce are all welcome; feel free to ping me on Twitter.